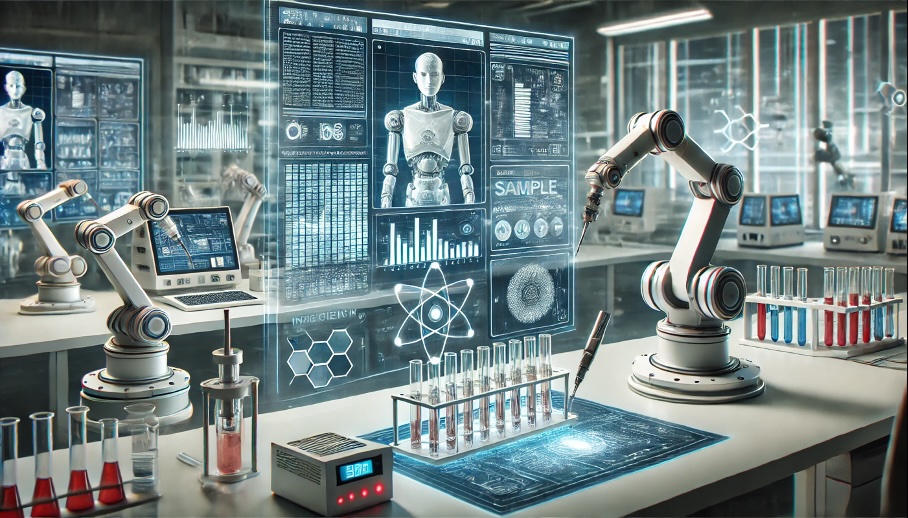

Brett Adcock, known for founding Figure AI, is back with a new project called Hark, and he’s going all in. He is backing it with $100 million of his own money, and the goal is to build what he calls “the most advanced personal intelligence in the world.” Rather than launching a single product, Hark aims to build an entire AI system from scratch.

What makes Hark stand out is how broad the approach is. Rather than focusing only on software or models, the team is working on everything at once: foundation models, software, and custom-built hardware, all designed to work together as one system. The team itself is stacked, with talent from companies like Apple, Google, Tesla, and Amazon. On top of that, the timeline is aggressive. The plan is to deploy large-scale GPU infrastructure soon and release the first models within months, with dedicated hardware following shortly after.

Max's Opinion

This is one of those projects that feels super ambitious right from the start. Building everything at once instead of focusing on one area is risky, but it could also be what makes Hark stand out. The timeline seems really fast, though, so the real question is whether they can actually deliver on all of this.